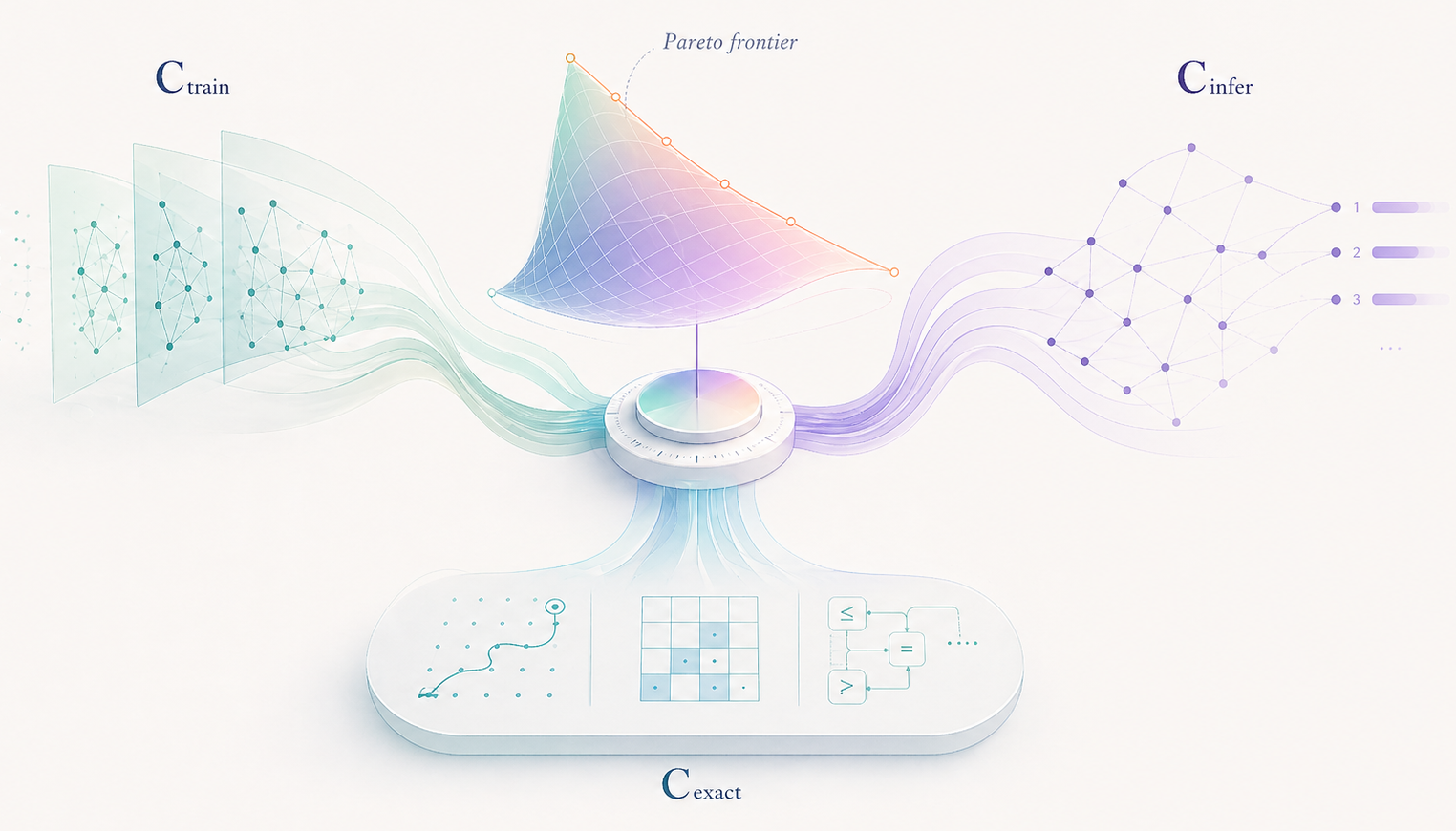

How should compute be allocated across these three components? I propose a very strong conjecture.

Master Conjecture: For every fixed problem class \(\mathcal{P}\), the optimal allocation of the total compute budget \(C\) is a fixed-ratio allocation:

\((C_{train}^{*}, C_{infer}^{*}, C_{exact}^{*}) = (\alpha_{\mathcal{P}}C, \beta_{\mathcal{P}}C, \gamma_{\mathcal{P}}C)\)

where \(\alpha_{\mathcal{P}} + \beta_{\mathcal{P}} + \gamma_{\mathcal{P}} = 1\) are constants that depend only on the problem class \(\mathcal{P}\).

In my PhD thesis, "Machine Learning-based Search in Combinatorial Optimization", I prove the Master Conjecture for a couple of tree search models. These models are very simplified, and the optimal allocation may well behave differently depending on the problem class \(\mathcal{P}\) and the power of exact algorithms in this class: in some cases \(C_{exact}^{*}\) may scale as \(O(C)\), but in others only as \(O(C / \log C)\) or even \(O(\log C)\). Nevertheless, it is notable that empirical experiments in combinatorial games such as Hex and chess show scaling laws of the same form.

Example 1: Chess AI Engine

Public numerical data on chess engines such as Stockfish lets us estimate the optimal allocation:

\(C_{train} : C_{infer} : C_{exact} \approx 18\% : 80\% : 2\%\)

This estimate is based on public Stockfish reports implying a gain of \(\approx 26\) Elo per doubling of training compute (as described for SFNNv6), \(\approx 110\) Elo per doubling of inference-time compute (derived from data on the Elo change under 20% time odds), and \(\approx 3\) Elo per doubling of exact compute (Syzygy tablebases). Modelling Elo as logarithmic in each budget, the optimal ratios follow from maximizing:

\(\mathrm{Maximize\;\;} E(C) \approx 26 \log_2 C_{train} + 110 \log_2 C_{infer} + 3 \log_2 C_{exact}\)

\(\mathrm{s.t.\;\;\;} C_{train} + C_{infer} + C_{exact} = C\)
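Because every term of \(E\) is logarithmic, this problem has a closed-form optimum. Writing \(a_i\) for the Elo-per-doubling coefficient of component \(i\), the Lagrangian condition \(a_i / (C_i \ln 2) = \lambda\) gives \(C_i^{*} = a_i C / (a_{train} + a_{infer} + a_{exact})\): each budget is a fixed fraction of \(C\), which is exactly the form of the Master Conjecture. The following is a minimal Python sketch, assuming the log-Elo model above with the coefficients 26, 110 and 3 quoted in the text; the helper names (`optimal_shares`, `elo`, `grid_search`) are illustrative, and the grid search is only a sanity check on the closed form.

```python
import math
from itertools import product


def optimal_shares(gains):
    """Closed-form optimum of sum_i g_i * log2(C_i) subject to sum_i C_i = C.

    With logarithmic returns, each component's share of the budget equals
    its Elo-per-doubling coefficient divided by the sum of all coefficients.
    """
    total = sum(gains.values())
    return {name: g / total for name, g in gains.items()}


def elo(shares, gains, budget=1.0):
    """Modelled Elo (up to an additive constant) for a given split of the budget."""
    return sum(g * math.log2(shares[name] * budget) for name, g in gains.items())


def grid_search(gains, step=0.005):
    """Brute-force check for three components: try every split on a grid."""
    names = list(gains)
    n = round(1 / step)
    best, best_split = -math.inf, None
    for i, j in product(range(1, n), repeat=2):
        k = n - i - j
        if k < 1:  # every component must receive some compute
            continue
        split = dict(zip(names, (i / n, j / n, k / n)))
        value = elo(split, gains)
        if value > best:
            best, best_split = value, split
    return best_split


# Elo gain per doubling of each budget, as quoted for Stockfish above.
gains = {"train": 26, "infer": 110, "exact": 3}

closed = optimal_shares(gains)
print({k: round(100 * v, 1) for k, v in closed.items()})
# -> {'train': 18.7, 'infer': 79.1, 'exact': 2.2}
print(grid_search(gains))  # lands within one grid step of the closed form
```

The closed-form split is close to the \(\approx 18\% : 80\% : 2\%\) allocation stated above; since the shares do not depend on \(C\), doubling the total budget simply doubles every component.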

Note that training happens once, while test-time inference and exact algorithms are used in every game. In practice, nobody spends four times more compute on inference for a single game than on training. If the number of games \(N\) is known in advance, the same optimization shows that the ratio of training compute to per-game inference and exact compute becomes \(18N : 80 : 2\). In scientific discovery applications, by contrast, inference may happen only a couple of times.
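The \(N\)-game rule follows from the same Lagrangian argument. If training is paid once and each of \(N\) games receives per-game budgets \(c_{infer}\) and \(c_{exact}\), the constraint becomes \(C_{train} + N(c_{infer} + c_{exact}) = C\), and the optimality conditions give \(C_{train} : N c_{infer} : N c_{exact} = a_{train} : a_{infer} : a_{exact}\). Total spend is split in the same proportions as before, but the per-game budgets are divided by \(N\). Below is a minimal Python sketch, assuming the same log-Elo model and Stockfish coefficients; `per_game_allocation` is a hypothetical helper, not part of any engine.

```python
def per_game_allocation(gains, total_budget, num_games):
    """Split total_budget when training is paid once and inference/exact
    compute are paid for each of num_games games.

    Assumes per-game Elo = sum_i g_i * log2(budget_i); the Lagrangian
    condition makes the *total* spend on each component proportional to g_i.
    """
    total_gain = sum(gains.values())
    alloc = {}
    for name, g in gains.items():
        spend = total_budget * g / total_gain  # total compute on this component
        # Training is a one-off cost; the other components are split across games.
        alloc[name] = spend if name == "train" else spend / num_games
    return alloc


gains = {"train": 26, "infer": 110, "exact": 3}
for n in (1, 100, 10_000):
    a = per_game_allocation(gains, total_budget=1.0, num_games=n)
    # The training-to-per-game-inference ratio grows linearly in N.
    print(f"N={n:>6}: train / per-game inference = {a['train'] / a['infer']:.1f}")
```

For \(N = 1\) this reduces to the single-game split; as \(N\) grows, training dominates each game's budget, which matches how engines are actually built.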

Example 2: AI for Science (AlphaTensor, AlphaEvolve, AlphaProof)

DeepMind has built many AI systems for search and discovery: AlphaZero, AlphaFold, AlphaTensor[6], AlphaEvolve[7], AlphaProof[8]. Some of their results were later improved[9] by adding classical CS algorithms and search techniques. The table below shows the compute allocation in these systems, highlights potential design improvements, and shows how \(C_{exact}\) naturally fits into this picture.

| System | Allocation Structure | Possible Improvements |
|---|---|---|
| AlphaTensor | Large \(C_{train}\) and \(C_{infer}\) for neural network training and large-scale tree search. Near-zero \(C_{exact}\), reported only for data augmentation and permutations. | Combine the tensors and expressions proposed by the neural network with exact classical algorithms such as MILP solvers and flip graph search. |
| AlphaEvolve | Large \(C_{train}\) for LLM pre-training, post-training, and RL. Large \(C_{infer}\) for reasoning-model inference. Task-dependent \(C_{exact}\) budget. | Initially, FunSearch was used to sample programs that generate solutions. With that design, \(C_{exact}\) would be used only for program evaluation and would therefore be sub-optimal under the proposed scaling. In AlphaEvolve, DeepMind instead introduced a new kind of \(C_{exact}\) scaling: the system samples search algorithms, which are then executed with a significant compute budget. |
| AlphaProof | Large \(C_{train}\) for training. Large \(C_{infer}\) for reasoning models and tree search. Task-dependent \(C_{exact}\) for compilation with the Lean kernel and tactic execution. | The latest Lean coding agents by Harmonic and AxiomMath likely devote significantly more \(C_{exact}\) budget to fixing Lean compilation errors, decomposing proofs into lemmas, and running stronger compilation engines. |

Connections to Other Topics

The idea of \(C_{exact}\) compute allocation is related to many other ongoing discussions and phenomena in AI:

- François Chollet's ideas on program synthesis and neurosymbolic AI;

- Sebastian Pokutta's blog post "Not every discovery needs an LLM"[10];

- The Claude Code leak showed reliance on many exact string-matching and context-processing algorithms;

- Whenever frontier AI labs report math benchmarks, the numbers with and without tools differ substantially. This gap can be viewed as the gain from \(C_{exact}\).

Notes

1. Claude E. Shannon, Programming a computer for playing chess, Philosophical Magazine, 1950.

2. David Silver et al., Mastering the game of Go with deep neural networks and tree search, Nature, 2016. David Silver et al., Mastering Chess and Shogi by Self-Play with a General Reinforcement Learning Algorithm, arXiv, 2017.

3. Noam Brown and Tuomas Sandholm, Superhuman AI for heads-up no-limit poker: Libratus beats top professionals, Science, 2018.

4. Charlie Snell et al., Scaling LLM Test-Time Compute Optimally can be More Effective than Scaling Model Parameters, arXiv, 2024. Andy L. Jones, Scaling Scaling Laws with Board Games, arXiv, 2021.

5. Michael P. Brenner, Vincent Cohen-Addad, and David P. Woodruff, Solving an Open Problem in Theoretical Physics using AI-Assisted Discovery, arXiv, 2026.

6. Alhussein Fawzi et al., Discovering faster matrix multiplication algorithms with reinforcement learning, Nature, 2022.

7. Alexander Novikov et al., AlphaEvolve: A coding agent for scientific and algorithmic discovery, arXiv, 2025. Google DeepMind, AlphaEvolve: A Gemini-powered coding agent for designing advanced algorithms, May 14, 2025.

8. Thomas Hubert et al., Olympiad-level formal mathematical reasoning with reinforcement learning, Nature, 2026. Google DeepMind, AI achieves silver-medal standard solving International Mathematical Olympiad problems, July 25, 2024.

9. Timo Berthold, FICO Xpress Optimization Surpasses AlphaEvolve's Achievements, FICO Blog, June 2025. Manuel Kauers and Jakob Moosbauer, The FBHHRBNRSSSHK-Algorithm for Multiplication in \(\mathbb{Z}_2^{5\times5}\) is still not the end of the story, arXiv, 2022.

10. Sebastian Pokutta, Not every discovery needs an LLM, blog post.